Eldercare will change

Posted by Tal Oron-Gilad in HRI, Human-Robot Interaction, News, Older adults, robotics on April 12, 2020

Here is a link to a short video summary of our work for the SOCRATES EU project. The overarching focus of this project is robotics in eldercare. The use cases have become extremely relevant with the coronavirus outbreak. We tended to assume that a shortage of professional personnel would be the main reason for deploying social robots for the older population. Now we also see the need for robots to maintain the safety of older adults and to avoid spreading the virus among those who are more vulnerable.

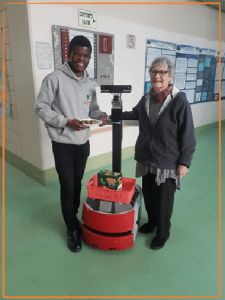

In SOCRATES we (Samuel Olatunji, our doctoral student; Yael Edan, my colleague; and myself) look at the necessary balance between the robot’s level of autonomy (LOA) and the amount and pace of information it should provide (LOT, level of transparency), so that people get just the right amount of feedback from the robot (too much may distract them; too little may cause confusion, distrust, and abandonment of this technology).

Our participants are active older adults who were willing to come to the lab and help us develop our algorithms and applications. We wish them all well and hope they stay healthy. We hope to see them all again in the lab when that becomes possible.

The robot that you see in the film is not teleoperated; it moves autonomously, following the user’s path and pace. This is the YouTube link: https://youtu.be/3ruDAcTzPIg

To read more about this work and about Samuel

The effects of road environment complexity and age on pedestrian’s visual attention and crossing behavior

Posted by Tal Oron-Gilad in children, News, pedestrians on January 25, 2020

Work on pedestrian distraction co-authored with Hagai Tapiro and Yisrael Parmet

Abstract

Introduction: Little is known about how the characteristics of the environment affect pedestrians’ road crossing behavior. Method: In this work, the effect of typical urban visual clutter created by objects and elements in the road proximity (e.g., billboards) on the road crossing behavior of adults and children (aged 9–13) was examined in a controlled laboratory environment, utilizing virtual reality scenarios projected on a large dome screen. Results: Divided into three levels of visual load, results showed that high visual load affected children’s and adults’ road crossing behavior and visual attention. The main effect on participants’ crossing decisions was seen in missed crossing opportunities. Children and adults missed more opportunities to cross the road when exposed to more cluttered road environments. An interaction with age was found in the dispersion of the visual attention measure. Children, 9–10 and 11–13 years old, had a wider spread of gazes across the scene when the environment was highly loaded, an effect not seen with adults. However, unexpectedly, no other indication of the deterring effect was found in the current study. Still, according to the results, it is reasonable to assume that busier road environments can be more hazardous to adult and child pedestrians. Practical Applications: In that context, it is important to further investigate the possible distracting effect of causal objects in the road environment on pedestrians, and especially children. This knowledge can help to create better safety guidelines for children and assist urban planners in creating safer urban environments.

I have been busy

Posted by Tal Oron-Gilad in Human-Robot Interaction, News on November 17, 2019

Not very many posts in 2019, but this does not mean that we have not conducted some really interesting research in our lab. On the contrary.

So, over the next few weeks I will begin posting some of our most recent accomplishments.

Here is just one:

Closing the feedback loop – the relationship between input and output modalities in HRI, presentation at the Human Friendly Robotics workshop in Rome 2019

ABC student poster: Tamara Markovich and Shanee Honig

Calibrating Adaptable Automation to Individuals

Posted by Tal Oron-Gilad in Affective Design, human factors engineering, levels of automation, News, shared control on June 28, 2018

At last, it’s out in public. This study, co-authored by Jen Thropp, James Szalma, and PA Hancock, investigates whether and how LOA (level of automation) should be calibrated to individuals’ traits (specifically here, attentional control).

To read more, click on this link

Abstract:

https://ieeexplore.ieee.org/document/8396314/

Eurohaptics 2018 – Katzman & Oron-Gilad

Posted by Tal Oron-Gilad in News, Tactile & Multimodal displays on June 17, 2018

Towards a Taxonomy of Vibro-Tactile Cues for Operational Missions, a poster presented by Nuphar Katzman

Abstract. The present study aims to serve as a preliminary stage in the examination and implementation of a taxonomy of vibro-tactile cues for operational missions. Previous research showed that using the tactile modality can help increase soldiers’ performance in terms of response time, accuracy in navigation and communication under busy conditions and/or high workload. The experimental pilot reported here focuses on how users (infantry soldiers) perceive tactile cues in terms of implication and urgency during such missions. Fifteen reserve soldiers completed a navigation mission in a virtual environment. During the navigation they received random tactile cues and were asked to assess the suitability of each cue to a specific context. At the end of the session, participants filled out a subjective questionnaire about their experience with the tactile cues. Results revealed three (out of five) superior cues, in terms of accurate identification and consistent association. This work provides the foundation to further develop a taxonomy of tactile cues for information types in operational missions. Future work should examine the identification of cues and their associated meanings when the relevant events occur in the simulation and outside in field tests.

Katzman and Oron-Gilad Eurohaptics 2018