Archive for category human factors engineering

The ‘Bot in the box’ Technique for remote HRI studies

Posted by Tal Oron-Gilad in HRI, human factors engineering, robotics on February 22, 2022

Here we describe a new method to conduct studies in HRI that we developed during the COVID-19 lockdowns.

Calibrating Adaptable Automation to Individuals

Posted by Tal Oron-Gilad in Affective Design, human factors engineering, levels of automation, News, shared control on June 28, 2018

At last it's out in the public. This study, co-authored by Jen Thropp, James Szalma and PA Hancock, investigates whether and how LOA (level of automation) should be calibrated to individuals’ traits (specifically here, attentional control).

To read more, see the abstract:

https://ieeexplore.ieee.org/document/8396314/

Open positions in Human Factors Engineering, Human-Robot Interaction or Human-Computer Interaction

Posted by Tal Oron-Gilad in HRI, human factors engineering, News on August 12, 2014

BGU is seeking excellent candidates for senior or junior faculty positions in the Dept. of Industrial Engineering and Management. Candidates will be part of the Human Factors Engineering team.

Relevant topics are: HCI, HRI, Usability, HFE, or any affiliated fields.

For more information please contact: Prof. Tal Oron-Gilad at orontal@bgu.ac.il

Visual search strategies of child-pedestrians in road crossing tasks

Posted by Tal Oron-Gilad in children, Dirichlet regression model, human factors engineering, News, pedestrians, Transportation & Safety on October 28, 2013

Hagai Tapiro, Anat Meir, Yisrael Parmet & Tal Oron-Gilad

Presentation at HFES-EU Annual meeting, Torino 2013

Abstract

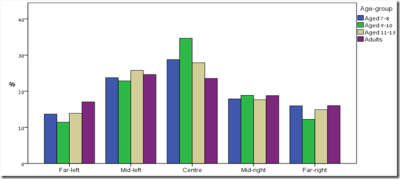

Children are over-represented in road accidents, often due to their limited ability to perform well in road-crossing tasks. The present study examined children’s visual search strategies in hazardous road-crossing situations. A sample of 33 young participants (ages 7-13) and 21 adults observed 18 different road-crossing scenarios in a 180° dome-shaped mixed reality simulator. Gaze data were collected while participants made the crossing decisions and were used to characterize their visual scanning strategies. Results showed that age group, limited field of view, and the presence of moving vehicles affect the way pedestrians allocate their attention in the scene. Adults tend to spend relatively more time in the farther peripheral areas of interest than younger pedestrians do. The oldest child age group (11-13) also resembled the adults more closely in their visual scanning strategy, which may indicate a learning process that originates from gaining experience and maturation. Characterization of child pedestrian eye movements can be used to determine readiness for independence as pedestrians. The results of this study emphasize the differences among age groups in terms of visual scanning. This information can help promote awareness and guide training directions.

Dirichlet regression model and analysis

For each scenario, five areas of interest (AOIs) were defined (as shown in the Figure). The close-range central area (AOI 3) was defined as the 10 meters of road on each side of the pedestrian’s point of view. Further areas were then defined symmetrically to the right and to the left of the center. The medium right/left range (AOIs 2/4) was the part of the road at least 10 meters to the right/left of the point of view but less than 100 meters away. The far right/left range (AOIs 1/5) was the part of the road at least 100 meters to the right/left of the pedestrian’s point of view.
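The AOI boundaries above can be written down as a small lookup. This is only an illustrative sketch: the function name `classify_aoi` and the sign convention (positive offsets to the pedestrian's right, negative to the left) are assumptions made here, not part of the study's analysis code.

```python
def classify_aoi(lateral_offset_m: float) -> int:
    """Map a signed distance along the road (in meters) to an AOI index 1..5.

    Assumed convention (hypothetical): positive = right of the pedestrian's
    point of view, negative = left.
    """
    distance = abs(lateral_offset_m)
    if distance < 10:
        return 3  # close-range central area
    to_right = lateral_offset_m > 0
    if distance < 100:
        return 2 if to_right else 4  # medium right / medium left
    return 1 if to_right else 5  # far right / far left


# Example: label a few gaze landing points along the road.
for offset in (0.0, 10.0, -50.0, 100.0, -250.0):
    print(offset, classify_aoi(offset))
```

A point exactly 10 or 100 meters away falls into the outer of the two neighbouring areas, following the "at least" wording of the definitions.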

Open this link to see a sample video of a scenario as seen by a young pedestrian

Why Dirichlet?

- For each participant and scenario, the total gaze distribution over the five AOIs sums to one.

- Therefore, gaze distribution is compositional data, i.e., non-negative proportions with unit sum.

- These types of data arise whenever we classify objects into disjoint categories and record their resulting relative frequencies, or partition a whole measurement into percentage contributions from its various parts.

- Attempts to apply statistical methods for unconstrained data often lead to inappropriate inference.

- Dirichlet regression, suggested by Hijazi and Jernigan (2009), is more suitable for such cases.
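To make the unit-sum constraint concrete, here is a minimal sketch that turns per-AOI dwell times into proportions and evaluates the Dirichlet log-density for them. The function names and the dwell times are invented for illustration; they are not data from the study.

```python
import math


def gaze_proportions(dwell_times_s):
    """Convert per-AOI dwell times (seconds) into proportions that sum to one."""
    total = sum(dwell_times_s)
    if total <= 0:
        raise ValueError("total dwell time must be positive")
    return [t / total for t in dwell_times_s]


def dirichlet_log_density(proportions, alphas):
    """Log-density of a Dirichlet distribution with concentration parameters alphas."""
    if any(p <= 0 for p in proportions):
        raise ValueError("the density needs strictly positive proportions")
    log_norm = math.lgamma(sum(alphas)) - sum(math.lgamma(a) for a in alphas)
    return log_norm + sum((a - 1) * math.log(p) for a, p in zip(alphas, proportions))


# Hypothetical dwell times for AOIs 1..5 in one scenario.
props = gaze_proportions([1.0, 2.0, 4.0, 2.0, 1.0])
print(props, sum(props))
print(dirichlet_log_density(props, [1.0, 2.0, 4.0, 2.0, 1.0]))
```

Because the five proportions are tied together by the sum constraint, treating each AOI as an independent outcome ignores that dependence, which is the motivation for a model defined directly on the simplex.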

How to use?

- The Dirichlet regression model was fitted using the DirichletReg package in R. A backward elimination procedure identified the best-fitting model, which has three significant main effects.

What did we find?

- The dependent variable was the vector of gaze proportions over the AOIs, and the independent variables were Age-group, POV and FOV; all of them were statistically significant (p < 0.05). Predicted means for the percentage of time spent in each AOI for each age group, based on the Dirichlet regression model, are shown in the following figure and reveal differences among age groups. Note how children aged 9-10 spend more time gazing at the central area, and note the differences between mid-left and mid-right.
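For readers wondering how predicted means come out of such a model, the sketch below assumes a log link on each AOI's concentration parameter (the setup the DirichletReg documentation calls the "common" parametrization). The covariate coding and every coefficient value are hypothetical placeholders, not the fitted estimates from this study.

```python
import math


def predicted_means(covariates, coefficients):
    """Expected proportion per AOI, given one coefficient vector per AOI.

    covariates: list of floats, starting with 1.0 for the intercept.
    coefficients: list of lists, one per AOI, same length as covariates.
    """
    # Log link: each concentration parameter is exp(linear predictor).
    alphas = [
        math.exp(sum(b * x for b, x in zip(beta, covariates)))
        for beta in coefficients
    ]
    precision = sum(alphas)
    # The mean of a Dirichlet component is its alpha divided by the sum of alphas.
    return [a / precision for a in alphas]


# Hypothetical coefficients: [intercept, adult indicator] for AOIs 1..5.
example_coefficients = [
    [0.2, 0.5],
    [0.8, 0.1],
    [1.5, -0.4],
    [0.8, 0.1],
    [0.2, 0.5],
]
print(predicted_means([1.0, 0.0], example_coefficients))  # child profile
print(predicted_means([1.0, 1.0], example_coefficients))  # adult profile
```

Whatever the coefficients, the predicted means are positive and sum to one, so they can be read directly as the expected share of gaze time in each AOI.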

Tools and Techniques to support operators in MOMU (Multiple Operator Multiple UAV) environments

Posted by Tal Oron-Gilad in human factors engineering, Military & Law Enforcement Applications, MOMU, robotics, UAV on December 1, 2011

The ‘RICH’ (Rapid Immersion tools/techniques for Coordination and Hand-offs) research project is a US-Israel collaboration. The project aims to research, design and develop tools, techniques and procedures that help operators in MOMU environments switch tasks and coordinate with other operators, with the goal of improving overall mission performance. The Israeli partners on this task are Jacob Silbiger from Synergy Integration, Lt. Col. Michal Rottem-Hovev from the IAF, and Drs. Tal Oron-Gilad and Talya Porat from the Dept. of Industrial Engineering and Management. The US partners are Jay Shively, Lisa Fern (Human Systems Integration Group Leader, Aeroflightdynamics Directorate, US Army Research Development and Engineering Command (AMRDEC)), and Dr. Mark Draper (USAFRL). RICH is part of the US/Israel MOA (mutual operation agreement) on Rotorcraft Aeromechanics & Man/Machine Integration Technology.

Here I describe in brief the goals of the Israeli team and some of the tools developed.

Motivation: Multiple operators controlling multiple unmanned aerial vehicles (MOMU) can be an efficient operational setup for reconnaissance and surveillance missions. However, it dictates switching and coordination among operators. Efficient switching is time-critical and cognitively demanding, thus vitally affecting mission accomplishment. As such, tools and techniques (T&Ts) to facilitate switching and coordination among operators are required. Furthermore, development of metrics and test-scenarios becomes essential to evaluate, refine, and adjust T&Ts to the specifics of the operational environment.

Tools: Tools can be divided into two categories: 1) tools that facilitate ‘quick setup’, i.e., aimed to ease the way of the operator into a new mission or area of operation; and 2) tools that facilitate on-going missions where acquiring new UAVs, delegating, or switching is necessary to complete the tasks at hand. The Israeli team focused primarily on tools of the second type. Some “successful” tools have been the Castling rays (see CHI paper for detail), the TIE/coupling tool, and the Maintain coverage area.

Several outcomes of this effort have been presented and appear in the following conference proceedings.

- Tal Oron-Gilad, Talya Porat, Lisa Fern, Mark Draper, Jacob Silbiger, Michal Rottem-Hovev and Jay Shively. Tools and Techniques for MOMU (Multiple Operator Multiple UAV) Environments. Human Factors and Ergonomics Society’s 55th Annual Meeting.

- Tal Oron-Gilad, Talya Porat, Jacob Silbiger, and Michal Rottem-Hovev. Decision Support Tools and Layouts for MOMU (multiple operator multiple UAV) Environments. ISAP Dayton OH, May 2-May 5, 2011.

- Talya Porat, Tal Oron-Gilad, Jacob Silbiger, and Michal Rottem-Hovev. Switch and Deliver: Decision Support Tools for MOMV (Multiple Operator Multiple Video feed) Environments, COGSIMA, Miami, FL. Feb. 22-24, 2011.

- Porat T., Oron-Gilad T., Silbiger J., and Hovev M. ‘Castling Rays’: a Decision Support Tool for UAV-Switching Tasks. CHI EA ’10, Proceedings of the 28th International Conference Extended Abstracts on Human Factors in Computing Systems.