Archive for category Human-Robot Interaction

Workshop: Participatory design (PD) of socially assistive robots (SARs)

Posted by Tal Oron-Gilad in HRI, Human-Robot Interaction, News on December 19, 2022

Last week we organized a hands-on workshop at ICSR 2022 using our toolkit.

Eldercare will change

Posted by Tal Oron-Gilad in HRI, Human-Robot Interaction, News, Older adults, robotics on April 12, 2020

Here is a link to a short video summary of our work for the SOCRATES EU project. The overarching focus of this project is robotics in eldercare. The use cases have become extremely relevant with the coronavirus outbreak. We had tended to assume that a shortage of professional personnel would be the main reason for deploying social robots for the older population. Now we also see the need for robots to keep older adults safe and to avoid spreading the virus among those who are more vulnerable.

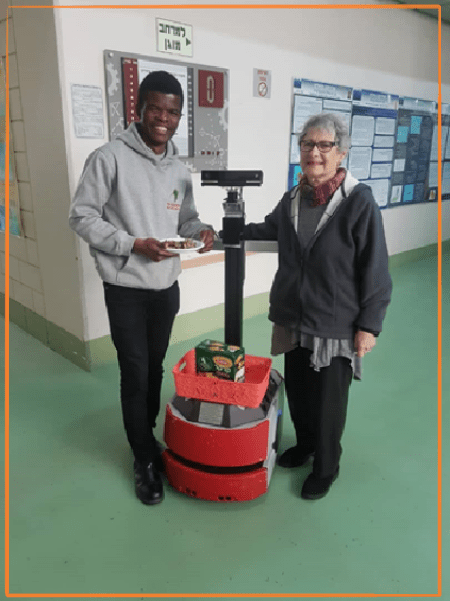

In SOCRATES we (Samuel Olatunji, our doctoral student; Yael Edan, my colleague; and myself) look at the necessary balance between the robot’s level of autonomy (LOA) and the amount and pace of information it should provide (LOT, level of transparency), so that people get just the right amount of feedback from the robot: too much may distract them, while too little may cause confusion, distrust, and abandonment of this technology.

Our participants are active older adults who were willing to come to the lab and help us develop our algorithms and applications. We wish them all well and hope they stay healthy. We hope to see them all back in the lab when that becomes possible again.

The robot that you see in the film is not teleoperated; it moves autonomously, following the user’s path and pace. This is the YouTube link: https://youtu.be/3ruDAcTzPIg

To read more about this work and about Samuel

I have been busy

Posted by Tal Oron-Gilad in Human-Robot Interaction, News on November 17, 2019

Not very many posts in 2019, but this does not mean that we have not conducted some really interesting research in our lab. On the contrary.

So, over the next few weeks I will begin posting some of our most recent accomplishments.

Here is just one:

Closing the feedback loop – the relationship between input and output modalities in HRI, presentation at the Human Friendly Robotics workshop in Rome 2019

ABC student poster: Tamara Markovich and Shanee Honig

Understanding and Resolving Failures in Human-Robot Interaction

Posted by Tal Oron-Gilad in HRI, Human-Robot Interaction, News, robotics on June 2, 2018

Shanee Honig and I have just finished a literature review on resolving failures in HRI. The full publication can be found in Frontiers.

We mapped a taxonomy of failures, separating technical failures from interaction failures [see 1].

After reviewing the cognitive considerations that influence people’s ability to detect and solve robot failures, as well as the literature on failure handling in human-robot interaction, we developed an information processing model called the Robot Failure Human Information Processing (RF-HIP) model. It is modeled after Wogalter’s C-HIP (an elaboration of Shannon and Weaver’s 1948 model of communication) and describes the way people perceive, process, and act on failures in human-robot interactions.

- RF-HIP can be used as a tool to systematize the assessment process involved in determining why a particular approach to handling failure is successful or unsuccessful in order to facilitate better design.

Abstract:

While substantial effort has been invested in making robots more reliable, experience demonstrates that robots operating in unstructured environments are often challenged by frequent failures. Despite this, robots have not yet reached a level of design that allows effective management of faulty or unexpected behavior by untrained users. To understand why this may be the case, an in-depth literature review was done to explore when people perceive and resolve robot failures, how robots communicate failure, how failures influence people’s perceptions and feelings towards robots, and how these effects can be mitigated. 52 studies were identified relating to communicating failures and their causes, the influence of failures on human-robot interaction, and mitigating failures. Since little research has been done on these topics within the Human-Robot Interaction (HRI) community, insights from the fields of human computer interaction (HCI), human factors engineering, cognitive engineering and experimental psychology are presented and discussed. Based on the literature, we developed a model of information processing for robotic failures (Robot Failure Human Information Processing (RF-HIP)), that guides the discussion of our findings. The model describes the way people perceive, process, and act on failures in human robot interaction. The model includes three main parts: (1) communicating failures, (2) perception and comprehension of failures, and (3) solving failures. Each part contains several stages, all influenced by contextual considerations and mitigation strategies. Several gaps in the literature have become evident as a result of this evaluation. More focus has been given to technical failures than interaction failures. Few studies focused on human errors, on communicating failures, or the cognitive, psychological, and social determinants that impact the design of mitigation strategies. 
By providing the stages of human information processing, RF-HIP can be used as a tool to promote the development of user-centered failure-handling strategies for human-robot interactions.

Towards Socially Aware Person-Following Robots

Posted by Tal Oron-Gilad in HRI, Human-Robot Interaction, News, robotics on May 29, 2018

Here is a new publication from our lab: a literature review of person-following in robotics from the perspective of the user. Published in IEEE THMS.


Abstract: